Blog 4: Time Complexity Explained Simply (Big-O Made Easy)

Time complexity describes how your code's running time grows as the input grows. Learn Big-O notation, understand O(1), O(n), O(log n), and O(n²) with simple examples and diagrams. Write efficient code and crack coding interviews. #DSA #BigO #TimeComplexity #Algorithms #Coding #Tech

🧠 Introduction

You wrote a solution. It works. ✅

But here’s the real question:

Will it still work efficiently when input becomes very large?

Handling 10 inputs is easy.

Handling 10 lakh (1,000,000) inputs is where real skill shows.

👉 This is why we need Time Complexity.

⚡ What is Time Complexity?

Time Complexity measures:

How the execution time of an algorithm grows with input size

👉 Important:

- It does NOT measure exact seconds

- It measures the growth pattern: how the number of steps changes as the input gets larger

🧊 Real-Life Analogy

Imagine searching for a name in a phonebook 📖

Case 1: Unsorted book

→ Check names one by one → slow (O(n))

Case 2: Sorted book

→ Open the middle, discard the half that cannot contain the name, repeat → faster (O(log n))

👉 Same task, different efficiency

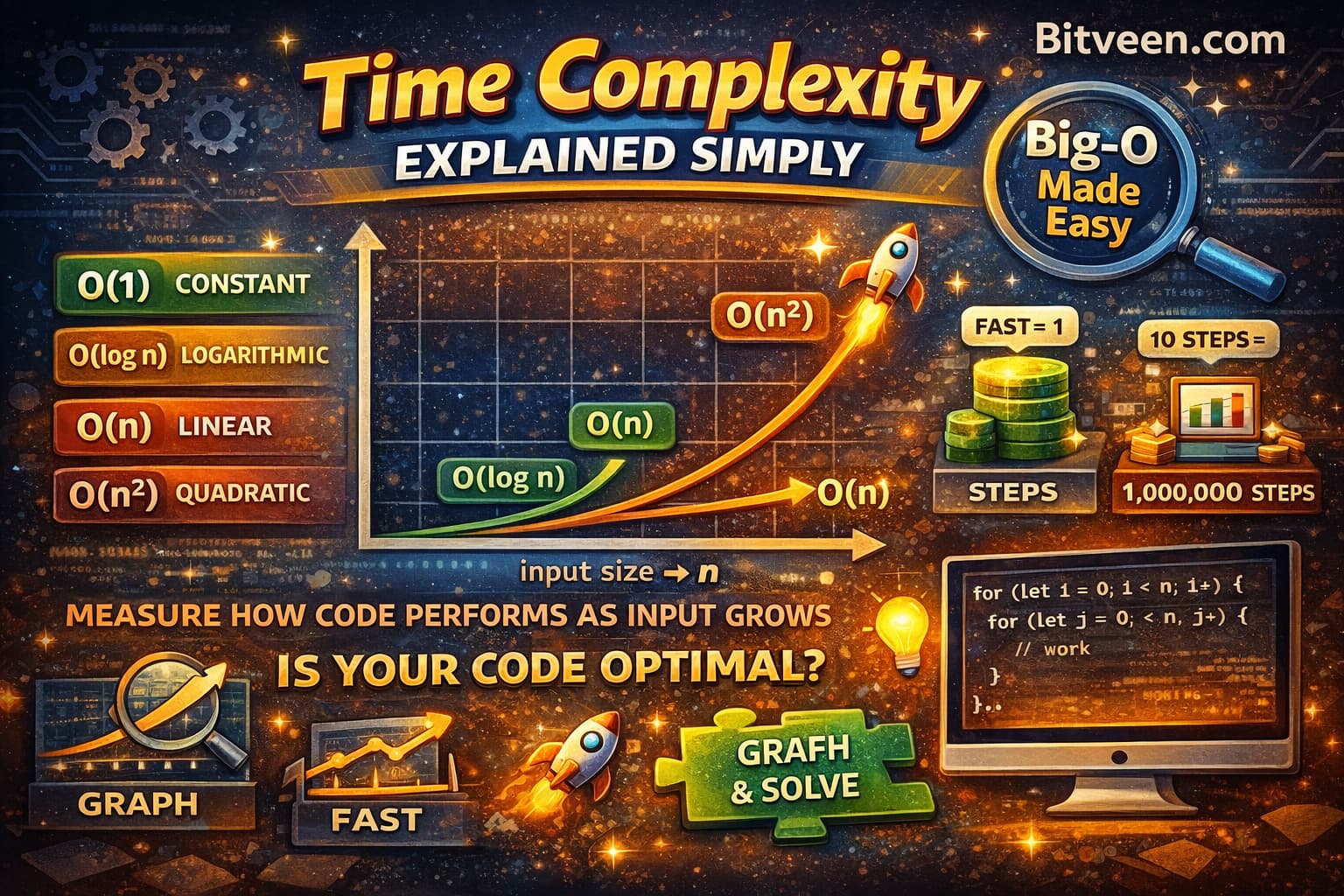

📊 Big-O Notation (Core Concept)

We represent time complexity using Big-O notation.

Let’s understand the most important ones 👇

🔹 O(1) — Constant Time

Input: 10 → 1 step

Input: 1,000,000 → 1 step

👉 Example:

const arr = [10, 20, 30];

console.log(arr[1]); // direct access

🔹 O(n) — Linear Time

Input: 10 → 10 steps

Input: 1000 → 1000 steps

👉 Example:

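function linearSearch(arr, target) {

  for (let i = 0; i < arr.length; i++) {

    if (arr[i] === target) return i; // found: stop early

  }

  return -1; // checked every element without a match

}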

🔹 O(log n) — Logarithmic Time

Input: 1000 → ~10 steps

👉 Idea: Reduce problem size by half each time

👉 Example: Binary Search
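The halving idea can be sketched as a small binary search. This is a minimal illustration, assuming the array is already sorted in ascending order; the function name `binarySearch` and the sample data are made up for this example.

```javascript
// Binary search on a sorted array (ascending order is assumed).
// Returns the index of target, or -1 if it is not present.
function binarySearch(sortedArr, target) {
  let low = 0;
  let high = sortedArr.length - 1;

  while (low <= high) {
    // Middle of the current search range
    const mid = low + Math.floor((high - low) / 2);

    if (sortedArr[mid] === target) return mid;

    if (sortedArr[mid] < target) {
      low = mid + 1; // target can only be in the right half
    } else {
      high = mid - 1; // target can only be in the left half
    }
  }

  return -1; // range is empty: target is not in the array
}

const sorted = [2, 5, 8, 12, 16, 23, 38];
console.log(binarySearch(sorted, 23)); // 5
console.log(binarySearch(sorted, 7)); // -1
```

Each pass through the loop throws away half of the remaining range, which is why 1000 elements need only about 10 comparisons.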

🔹 O(n²) — Quadratic Time

Input: 10 → 100 steps

Input: 1000 → 1,000,000 steps 😨

👉 Example:

for (let i = 0; i < n; i++) {

  for (let j = 0; j < n; j++) {

    // work

  }

}
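To see where the n × n comes from, here is a small sketch that counts how many times the inner body runs. The function name `countNestedSteps` is invented for this example.

```javascript
// Counts how often the innermost line of two nested loops runs.
function countNestedSteps(n) {
  let steps = 0;
  for (let i = 0; i < n; i++) {
    for (let j = 0; j < n; j++) {
      steps++; // stands in for "// work"
    }
  }
  return steps;
}

console.log(countNestedSteps(10)); // 100
console.log(countNestedSteps(1000)); // 1000000
```

The outer loop runs n times, and for each of those the inner loop runs n times, so the body runs n × n times in total.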

📉 Visual Growth Comparison

Input Size (n) →

--------------------------------------------------------------

O(1)

→ ●────────●────────●────────●

same steps regardless of input (constant time)

O(log n)

→ ●───●────●─────●──────●

reduces problem size by half each step (Binary Search)

O(n)

→ ●────●────────●──────────●

checks every element one by one (Linear Search)

O(n²)

→ ●────────●──────────────●──────────────●

nested loops, comparisons grow very fast 🚀

⚡ One-Line Interpretation

As input grows, O(1) stays flat, O(log n) grows slowly, O(n) grows steadily, and O(n²) grows very fast.

n = 10

O(1) → 1 step

O(log n) → ~3 steps

O(n) → 10 steps

O(n²) → 100 steps 😨
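These numbers can be reproduced with a few lines of code. The sketch below assumes "steps" means 1 for O(1), log₂(n) rounded to the nearest whole number for O(log n), n for O(n), and n × n for O(n²). The helper name `stepsFor` is invented for this example.

```javascript
// Rough step counts for each growth pattern at input size n.
// These are idealized counts, not measurements of real code.
function stepsFor(n) {
  return {
    constant: 1,
    logarithmic: Math.round(Math.log2(n)),
    linear: n,
    quadratic: n * n,
  };
}

// Print the counts for a small, medium, and large input.
for (const n of [10, 1000, 1000000]) {
  console.log(n, stepsFor(n));
}
```

Try changing the list of sizes to see how quickly the quadratic column runs away from the others.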

| Input size | Small | Medium | Large |
| --- | --- | --- | --- |
| O(1) | constant | constant | constant |
| O(log n) | low | low | medium |
| O(n) | low | medium | high |
| O(n²) | low | high | very high 🚀 |

⚠️ Why This Matters

Let’s compare two solutions to the same problem at n = 1,000:

- O(n) → 1,000 steps

- O(n²) → 1,000,000 steps

👉 Same problem

👉 Massive difference in performance
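As one illustration of "same problem, different performance", consider checking whether an array contains a duplicate. Both functions below are sketches written for this post (the names `hasDuplicateQuadratic` and `hasDuplicateLinear` do not come from any library). The first compares every pair. The second remembers values it has seen in a `Set`, whose lookups are typically constant time on average.

```javascript
// O(n²): compare every pair of elements.
function hasDuplicateQuadratic(arr) {
  for (let i = 0; i < arr.length; i++) {
    for (let j = i + 1; j < arr.length; j++) {
      if (arr[i] === arr[j]) return true;
    }
  }
  return false;
}

// O(n) on average: one pass, remembering values already seen.
function hasDuplicateLinear(arr) {
  const seen = new Set();
  for (const value of arr) {
    if (seen.has(value)) return true;
    seen.add(value);
  }
  return false;
}

console.log(hasDuplicateQuadratic([3, 1, 4, 1, 5])); // true
console.log(hasDuplicateLinear([3, 1, 4, 1, 5])); // true
console.log(hasDuplicateLinear([3, 1, 4])); // false
```

The linear version trades a little extra memory (the `Set`) for far fewer comparisons on large inputs.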

🧠 Interview Insight

Interviewers don’t just check if your code works.

They ask:

- Can you optimize this?

- Can you reduce time complexity?

👉 A good solution = correct

👉 A great solution = correct and efficient

❌ Common Mistakes

❌ Focusing only on output

Ignoring performance

❌ Not analyzing loops

Loops over the input usually decide the complexity: loops that run one after another add up, nested loops multiply

❌ Ignoring worst case

Big-O is usually quoted for the worst case, so ask how many steps your code takes on the least favorable input
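The worst-case point can be made concrete with linear search. The sketch below adds a step counter so the best and worst cases are visible side by side. The name `linearSearchWithSteps` and the shape of the returned object are invented for this example.

```javascript
// Linear search that also reports how many elements it checked.
function linearSearchWithSteps(arr, target) {
  let steps = 0;
  for (let i = 0; i < arr.length; i++) {
    steps++;
    if (arr[i] === target) return { index: i, steps };
  }
  return { index: -1, steps };
}

const data = [10, 20, 30, 40, 50];
console.log(linearSearchWithSteps(data, 10)); // best case: found after 1 step
console.log(linearSearchWithSteps(data, 50)); // last element: found after 5 steps
console.log(linearSearchWithSteps(data, 99)); // worst case: not found after 5 steps
```

The same function takes 1 step or n steps depending on the input, and it is the n-step case that earns it the O(n) label.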

🧩 Quick Practice

👉 What is the time complexity?

for (let i = 0; i < n; i++) {

  console.log(i);

}

👉 Answer: O(n)

🚀 What’s Next?

Now that you understand performance…

👉 Next blog:

“Space Complexity Explained (Memory Matters Too)”

✍️ Closing Thought

“Writing code is easy. Writing efficient code is what makes you a great developer.”